At the cutting edge of Technical Communication: Conference program 2026

Information Energy 2026 – Shaping the Future of TC in the Age of AI

A Successful Online Event

The Information Energy 2026 online conference, hosted by tcworld GmbH, took place from April 22 to 24 and successfully brought together the global TechComm community in a fully virtual format. The event welcomed more than 300 professionals from around the world, confirming its role as a truly international meeting point for technical communication and knowledge work. Attendees joined from 27 countries, including Germany, Austria, Switzerland, the USA, Canada, Australia, Japan, India, China, and Korea, as well as many countries across Europe and beyond, such as Romania, Bulgaria, Hungary, the Czech Republic, Ukraine, Great Britain, Ireland, Finland, Norway, Sweden, Denmark, the Netherlands, Belgium, France, Spain, Portugal, Italy, Israel, and Turkey.

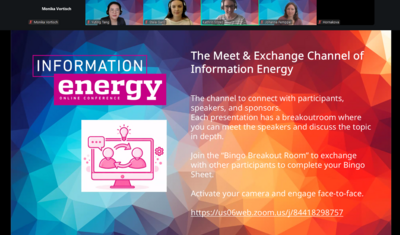

Across the three days, a dynamic program of more than 35 highly engaging sessions explored current and future trends in technical communication, software documentation, technical translation, and AI. Around 40 top speakers from all over the world contributed their expertise through a rich mix of technical presentations, hands-on workshops, tutorials, meetups, exhibitor showcases, and panel discussions, ensuring a varied and practice-oriented experience for all attendees. The conference was hosted via Zoom, making it easily accessible and enabling seamless participation from professionals across different time zones.

The global TechComm community came together to exchange ideas, share experiences, and reflect on how rapidly evolving technologies are reshaping the way we create, manage, and deliver information. Each session added a unique perspective, ranging from AI-driven transformation and structured content strategies to collaboration models and future-ready documentation practices.

Here’s a look back at the key moments and highlights that made the conference a success.

Kickoff: Welcome & Connect

The event started with a strong sense of anticipation, bringing together attendees from a wide range of industries who were eager to learn from leading experts and connect with fellow professionals across the global TechComm community. The first day opened with a warm welcome from the Information Energy event team, who set the tone for the conference and expressed their appreciation for everyone joining the online gathering.

In their opening remarks, the team thanked attendees for taking the time to be part of the event and wished everyone a successful and enriching conference experience. They encouraged attendees to enjoy the sessions, engage actively, and take away valuable insights for their professional development and careers. The message emphasized both gratitude and inspiration, highlighting the importance of shared learning and community exchange in the field of technical communication.

From Structured Content to Intelligent Information Systems: Human Expertise in the Age of AI

The first day of Information Energy revolved around a shared transformation theme: how technical communication is shifting from static documentation toward structured, AI-driven, and increasingly agentic information systems, where content must be designed for both human understanding and machine reasoning.

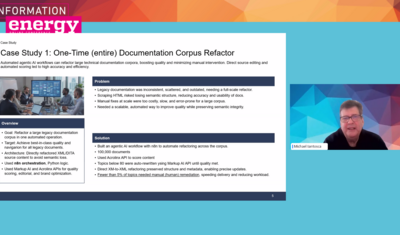

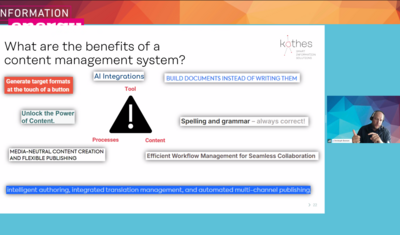

Sebastian Göttel in The Technical Writer is Dead, Long Live the Technical Writer! emphasized that rich metadata and deep contextualization are essential for reliable AI outputs, especially as users increasingly expect fast, conversational access to information. This shift makes structured systems like CCMS indispensable for controlling context and reducing AI errors, while Felix Burth in his contribution compared the development to early industrialization, where automation reshapes not only tasks but entire roles. In this view, new responsibilities such as context engineering, quality architecture, and AI operations emerge, all centered on managing structured knowledge in AI-driven environments.

Marianne McGregor in From Reverse Prompt Engineering to Context Engineering: Control and Optimize Generative AI Outputs demonstrated that improving AI results requires both analyzing outputs through reverse prompt engineering and deliberately designing the broader context in which AI operates. This includes structured inputs, grounding in reliable sources, and system-level controls that shape behavior. In a closely related approach, Rahel Bailie and Dr. Harald Stadlbauer in Using Semantic Schemas, Structures, and AI to Improve Results From LLMs showed how semantic schemas and structured content significantly improve the accuracy and usability of AI-generated outputs.

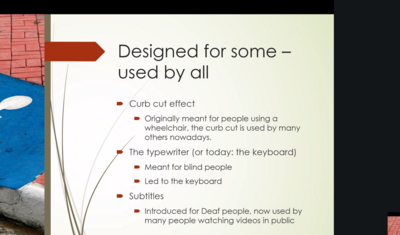

Shifting toward the user perspective, Kirk St.Amant in Cognitive Factors Affecting Content Usability introduced the FACOS model—findability, accessibility, comprehensibility, and operability—to explain how cognitive processes shape usability. He highlighted that effective content must align with both human mental processes and system requirements to be truly usable. At the same time, Lena Krauß and Nadine Brockschmidt in Mastering the Mess: MINDA’s Master Data Makeover illustrated the operational consequences of poor data quality, showing how structured terminology and strong governance can dramatically improve efficiency, reduce costs, and increase consistency.

In AI Fallacies in Documentation, Giuseppe Getto and Jackie Damrau addressed widespread misconceptions about AI adoption, emphasizing that without structure, metadata, and human oversight, automation tends to amplify errors rather than reduce them. Complementing this practical perspective, Manny Silva in Demystifying Docs-as-Code demonstrated how version control, automation, and structured development workflows can significantly improve documentation quality, consistency, and scalability.

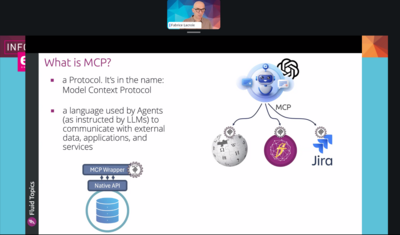

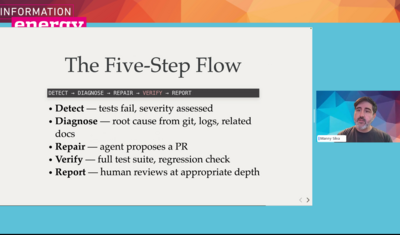

Looking ahead, Michael Iantosca in his keynote AI and the Future of Technical Documentation: From LLMs to Fully Agentive Workflows provided a forward-looking perspective on multi-agent systems that already support documentation processes in practice. He emphasized that while these systems increase automation and autonomy, they still require human governance, positioning technical writers increasingly as knowledge experience engineers who design, supervise, and orchestrate intelligent documentation ecosystems.

The closing panel with Dr. Harald Stadlbauer, Wouter Maagdenberg, Stefanie Amberg, Colin Marklowitz, and David Kavermann reinforced this outlook by framing AI not as a replacement, but as a catalyst for transformation, highlighting that the core value of technical communicators lies in context creation, quality assurance, and orchestration of AI-driven workflows.

Overall, the day made clear that technical communication is evolving into a structured, AI-native discipline where human expertise remains essential for designing systems, ensuring quality, and enabling reliable, scalable knowledge delivery.

From Structured Content to Trusted AI Workflows: Governance, Quality, and Data-Driven Documentation

The second day of the Information Energy conference revolved around a shared overarching theme: how technical communication and knowledge work are being reshaped by AI, and how content must be structured, validated, and maintained so it can reliably serve both humans and machines.

Marianne McGregor in The Prompt Lab: Experiment, Create, Innovate framed prompting as an iterative design process, where AI outputs are continuously refined by identifying missing elements and improving instructions through structured experimentation. Fabrice Lacroix in Your #1 Reader will Soon be an AI – Are you Ready? reinforced the idea that AI agents are becoming primary consumers of content, requiring organizations to unify fragmented knowledge and design information explicitly for machine reasoning.

This shift toward machine-centric content was extended by Suvitha Babu T S in AI-Enabled Content Supply Chains for Responsible, Enterprise-Scale Knowledge Ecosystems, who highlighted that many organizations still struggle with fragmented repositories, inconsistent metadata, and weak governance, leading to unreliable AI outcomes. She proposed a structured model combining design, governance, automation, and assurance to turn content into a reliable, scalable supply chain for responsible AI use.

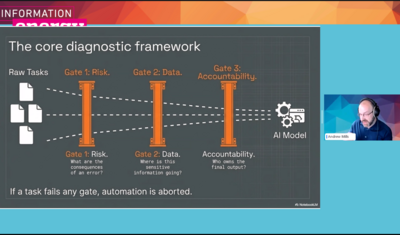

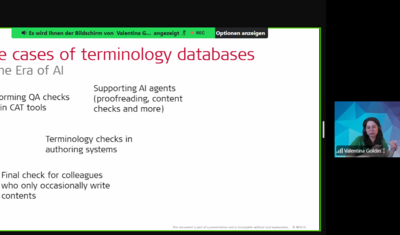

In From Can (A)I to Should (A)I?, Andrew Mills emphasized that despite growing automation capabilities, humans must remain accountable for AI-assisted documentation, particularly in high-risk contexts. This makes structured workflows, validation mechanisms, and careful prompt design essential foundations for trustworthy outputs. In a closely related direction, Valentina Goldin’s Well Begun Is Half Done demonstrated how centralized terminology management and integrated systems help ensure consistency at scale, increasingly supported by AI-driven terminology and style assistance.

A shift toward measurable quality was highlighted by Sadhana Suresh in Driving Exploration and Adoption: A Data-Driven Approach to Documentation, where analytics were used to reveal how users actually interact with documentation and where content fails to support discovery or understanding. Complementing this, Rob Hanna in The Content Triforce in 2026 argued that AI readiness depends far less on models and far more on structured content, combining DITA, iiRDS metadata, and microcontent principles to enable precise and reusable information across systems.

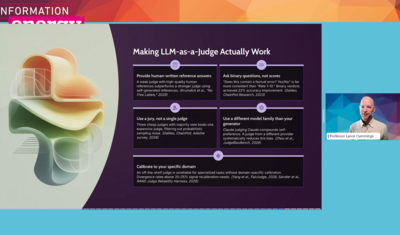

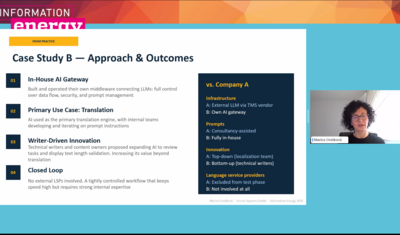

Extending this structured perspective into multilingual environments, Marina Orešković in Localization in Technical Communication with a Touch of AI showed how AI can significantly accelerate localization workflows, while simultaneously increasing the need for consistent source content, strong data foundations, and clearly defined quality processes. In a methodological extension of this idea, Lance Cummings in Evidence-Based Prompt Design for AI Writing Systems argued that prompts should be treated as testable artifacts, evaluated through structured criteria, information types, and iterative validation rather than intuitive use alone.

Bringing the focus back to the user, Jonatan Lundin, PhD, in Uncovering Why Users don’t Understand Manuals explained through cognitive psychology how comprehension depends on mental models, showing that effective documentation must actively support user thinking rather than merely provide information. Building on this human-centered perspective, Toni Byrd Ressaire in Copilot Allies: Content Governance Through Human-Machine Collaboration demonstrated how AI agents can support governance tasks such as reducing duplication and resolving ambiguity, but only when combined with human oversight and clearly defined semantic context layers.

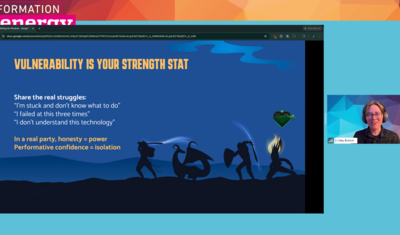

Shifting away from systems toward community, Caley Burton in Rolling for Wisdom, or Why You Should Build a Doc Community argued that resilience in technical communication is not only a matter of tools and processes, but also of strong professional communities that enable shared learning and reduce isolation in a rapidly changing environment. Finally, Manny Silva in Docs as Tests: A Strategy for Resilient Docs brought many of these themes together by proposing that documentation itself can be treated as executable, testable assertions tied to real product behavior, ensuring continuous validation and a tight alignment between content and reality.

The closing panel discussion with Scott Abel, Dr. Harald Stadlbauer, and other industry voices concluded the day by looking toward fully agentive systems capable of perceiving, deciding, and acting with minimal human intervention. The panel emphasized that these systems will only succeed with strong content structure, governance, and orchestration, positioning technical communicators as key designers and stewards of future AI-driven information ecosystems. The day closed on a clear note: agentic workflows are becoming an immediate and strategic reality rather than a distant vision.

From Documentation to Intelligent Knowledge Ecosystems: Connecting Content, Context, and AI

The third day of Information Energy revolved around a clear overarching theme: From Content Creation to Intelligent Information Ecosystems, where technical communication moves beyond producing documentation toward structuring, connecting, governing, and operationalizing content for AI-driven environments.

The meetup with Kai Weber, Creating Content With or For AI, highlighted that many organizations still struggle with restricted access, security constraints, and fragmented tooling, while practitioners are already experimenting with small, practical AI use cases that gradually shift their role toward orchestrating content workflows rather than producing content manually. Christoph Beenen in Before You Buy a CCMS, Fix Your Mess reinforced this perspective by showing that tools alone do not solve structural problems, and that success depends on aligned processes, clear ownership, and well-managed metadata.

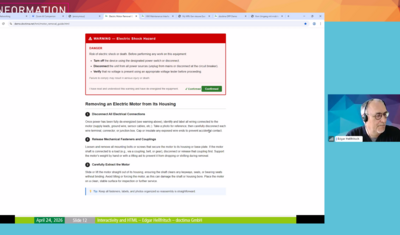

Lara Steinmetz in Multimodality and Accessibility in TD expanded the scope beyond structure by emphasizing that documentation must also be inclusive and accessible, since poor accessibility directly impacts adoption and increases support effort. Edgar Hellfritsch in Interactivity and HTML – Reasons to (Finally) Abandon PDF further developed this idea by arguing for modular, semantic, and interactive documentation formats that move beyond static PDFs toward connected information experiences.

This evolution toward connected ecosystems was extended by Harald Stadlbauer and Helmut Nagy in iiRDS as a Bridge Between DITA, Markdown and File Based Content, who demonstrated how standards like iiRDS enable interoperability across fragmented systems and, when combined with knowledge graphs and AI, support context-aware information delivery. Frank Wegmann in Exploring the Realm of Metadata in Technical Communication complemented this by positioning metadata as a foundational layer for automation, retrieval, and AI readiness across complex documentation landscapes.

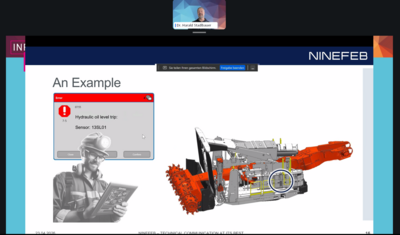

The shift toward operational intelligence became more tangible in Maximilian Gärber’s Agentic AI meets Knowledge Graph, where AI agents combined with structured knowledge were shown to deliver real-time, situation-specific decision support for service technicians. In a similar direction, Hannes Endreß in The AI Trainer demonstrated how AI can transform technical documentation into adaptive learning systems that personalize content and reduce dependency on human trainers, while Gabriele Buchner, Mike Swain, Sebastian Sappl, and Thomas Meaney in How We Built an LLM-Powered App for User Assistance Experts at SAP showed how such concepts can already be implemented in production environments through integrated AI tools with human-in-the-loop quality control.

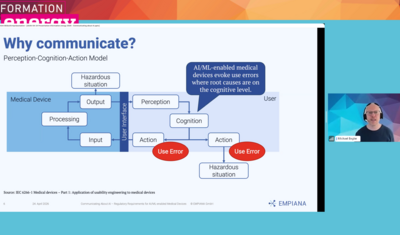

Adding an important boundary perspective, Michael Engler in Communicating About AI – Regulatory Requirements for AI/ML-enabled Medical Devices emphasized that as AI becomes embedded in products, documentation must clearly communicate limitations, risks, and regulatory requirements to ensure safe and responsible use.

Overall, the third day illustrated a consistent direction across all sessions: technical communication is evolving into the design of intelligent, structured, and governed information ecosystems, where content is not only created, but connected, contextualized, and operationalized for both human users and AI systems.

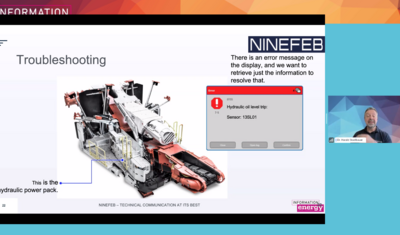

Industry Insights and Future Directions

The conference also explored some of the most pressing topics in information technology and documentation. Discussions on automation, AI, and the evolving role of technical communicators provided a clear picture of where the industry is headed.

Keynotes and panels explored how new technologies are reshaping the way content is created, managed, and distributed. Experts discussed the growing importance of AI and machine learning in streamlining documentation processes while also addressing the challenges of maintaining quality and consistency across platforms.

To conclude the first day, the closing panel discussion with Dr. Harald Stadlbauer, Wouter Maagdenberg, Stefanie Amberg, Colin Marklowitz, and David Kavermann brought together many of the day’s themes by discussing whether AI represents a threat or an opportunity for technical writers, ultimately framing it as a powerful driver of transformation rather than replacement. The panel highlighted that while AI can automate parts of content production, the core value of technical communicators lies in context creation, quality assurance, and cross-functional collaboration, leading to evolving roles such as knowledge engineers and AI orchestrators. This discussion set a positive tone for the future, emphasizing a shift toward more strategic and impactful responsibilities within AI-driven documentation ecosystems.

Closing Thoughts: A Truly Memorable Experience

Information Energy 2026 was a resounding success, offering attendees a platform to learn, network, and engage with thought leaders from the technical documentation and IT industries. It not only provided valuable content but also fostered a sense of community among professionals from around the world.

Looking back on this exciting event, it’s clear that the future of technical communication is full of opportunities. With new technologies on the horizon and a growing focus on automation and efficiency, the field continues to evolve. The conference highlighted the innovation and adaptability of the community, and we look forward to the next opportunity to come together and share in these exciting developments. And behind the scenes we had a lot of fun. We hope you enjoyed it, too!